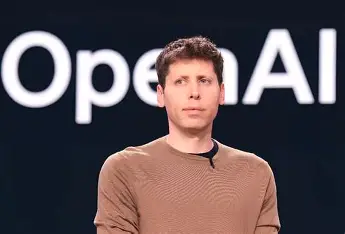

Trust in OpenAI’s ChatGPT has come under renewed scrutiny after the company’s CEO, Sam Altman, publicly acknowledged that the artificial intelligence system is prone to “hallucinations.” The term describes instances where the AI generates factually incorrect or misleading content.

Speaking at a leading technology conference, Altman stated, “You should not trust ChatGPT outputs blindly. Unlike a calculator or database, these systems sometimes make things up.” The comment has triggered widespread concern among users and experts, particularly as AI tools like ChatGPT continue to be integrated into daily business operations, education, and healthcare systems.

Hallucinations in AI refer to outputs that are not grounded in reality, despite appearing confident and well-formatted. While the phenomenon has been known to researchers and developers for years, Altman’s frank admission brings it to the forefront of public discussion, raising questions about the dependability of generative AI technologies.

Conventional software performs predictable, verifiable operations. Generative AI models, by contrast, work probabilistically: they produce responses by predicting likely sequences of words based on patterns learned from vast training datasets. This can yield fluent but inaccurate answers, especially when the models handle complex or ambiguous queries.

Following Altman’s remarks, several AI experts and ethicists called for increased transparency and accountability in AI deployment. “This should be a turning point,” said Dr. Alicia Morgan, a digital ethics researcher at Stanford University. “We need clearer communication to users about where and when these systems can go wrong.”

The admission is also expected to influence ongoing regulatory discussions. In both the European Union and the United States, policymakers are crafting legislation aimed at improving oversight of AI systems, including requirements for explainability, data privacy, and safety standards.

Despite these limitations, ChatGPT continues to see widespread usage, from customer service automation and content creation to virtual assistance and academic support. Industry analysts, however, caution businesses against over-reliance on generative AI without appropriate human supervision.

“AI models like ChatGPT are powerful, but they’re still fallible,” said Rahul Mehta, a technology analyst at TechScope Research. “Companies need to implement safeguards to prevent the spread of false or biased information.”

Altman’s statement serves as a reminder that while generative AI has the potential to transform industries, it remains a tool that must be used with caution. Experts emphasize the importance of educating users about AI limitations and ensuring that developers are transparent about known flaws.

As the debate around AI trustworthiness intensifies, Altman’s acknowledgment may prompt both developers and users to revisit the assumptions underpinning the use of generative models in critical domains.